Revisiting Algorithmic Progress

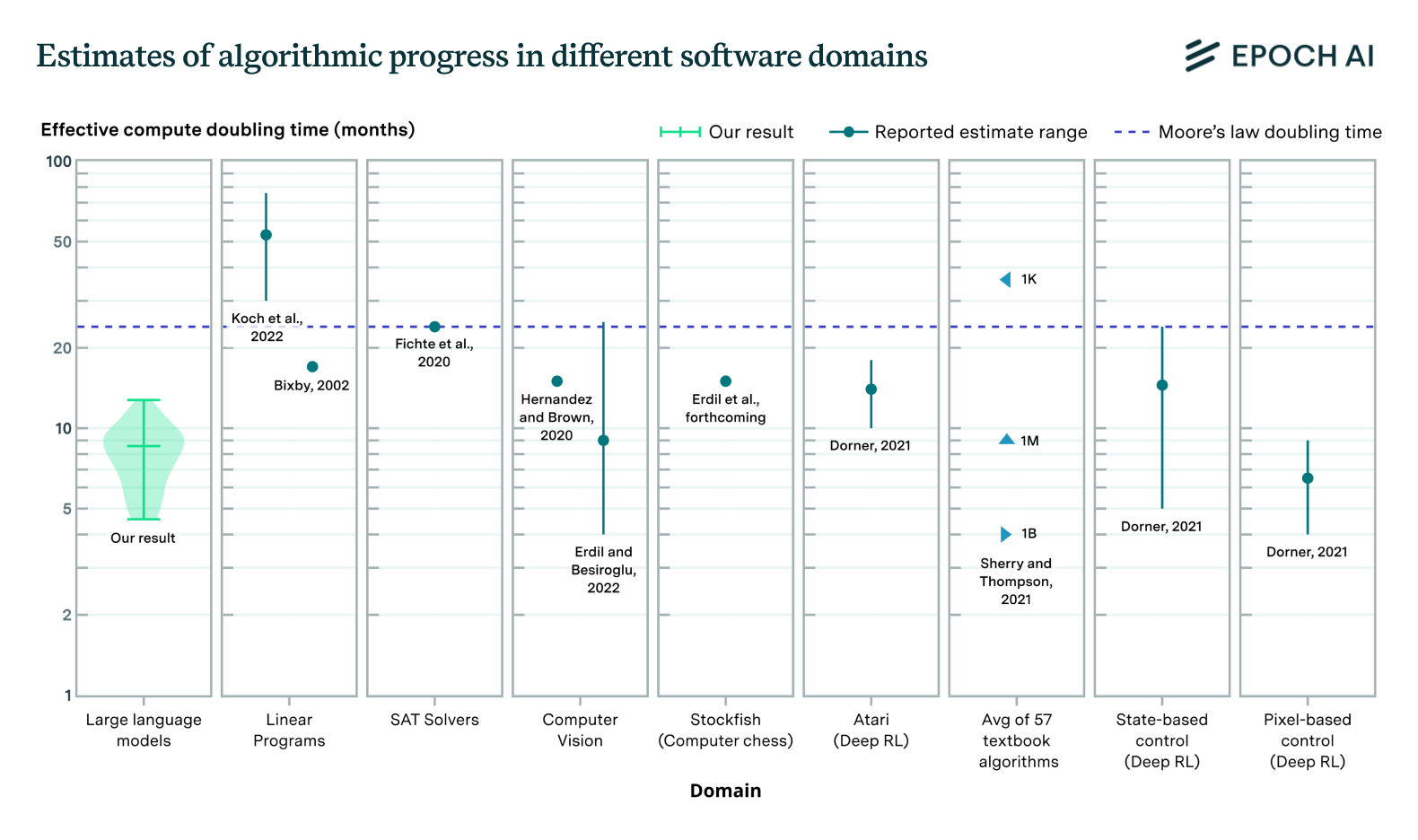

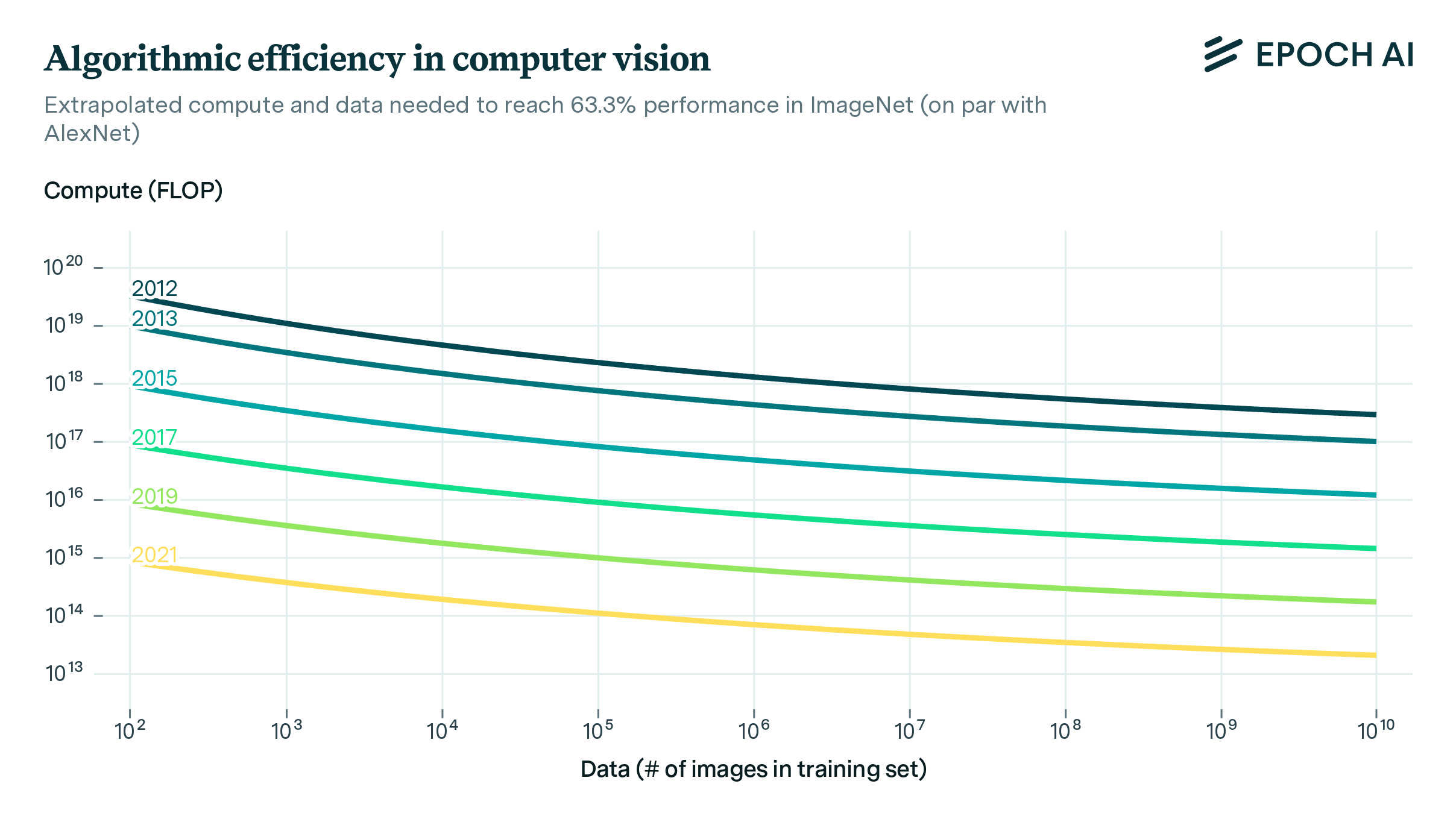

We use a dataset of over a hundred computer vision models from the last decade to investigate how better algorithms and architectures have enabled researchers to use compute and data more efficiently. We find that every 9 months, the introduction of better algorithms contributes the equivalent of a doubling of compute budgets.

Overview

How much progress in ML depends on algorithmic progress, scaling compute, or scaling relevant datasets is relatively poorly understood. In our paper, we make progress on this question by investigating algorithmic progress in image classification on ImageNet, perhaps the most well-known test bed for computer vision.

Using a dataset of a hundred computer vision models, we estimate a model, informed by neural scaling laws, that enables us to analyse the rate and nature of algorithmic advances. We use Shapley values to decompose the various drivers of progress in computer vision and estimate the relative importance of algorithms, compute, and data.

Our main results include:

- Every nine months, the introduction of better algorithms contributes the equivalent of a doubling of compute budgets. This is much faster than the gains from Moore’s law, though the estimate is uncertain (our 95% CI spans 4 to 25 months).

- Roughly 45% of progress in image classification has been due to the scaling of compute, ~45% due to better algorithms, and ~10% due to scaling data.

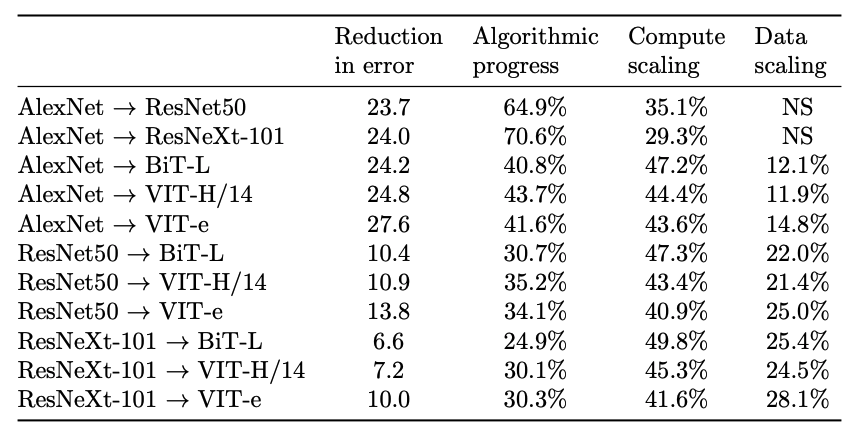

Attribution of progress to algorithmic progress, compute scaling and data scaling between model pairs based on Shapley decomposition. “NS” indicates that there was no scaling of the relevant input between these models. Numbers may not all add up to 100 due to rounding.

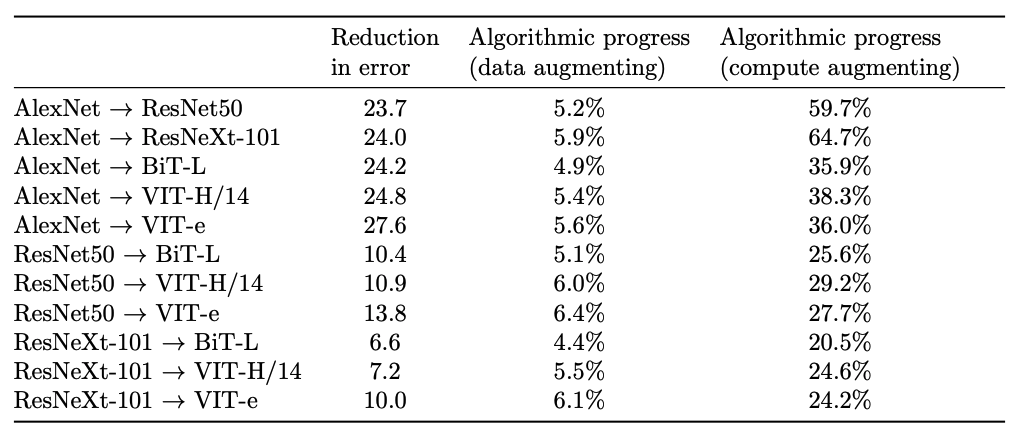

- The majority (>75%) of algorithmic progress is compute-augmenting (i.e. enabling researchers to use compute more effectively), a minority of it is data-augmenting

Shares of algorithmic progress that is compute- vs. data-augmenting.
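To make the Shapley attribution above more concrete, here is a minimal sketch of how a gain between two models could be split across algorithms, compute, and data. The loss function `toy_loss` and the `OLD`/`NEW` input levels are hypothetical placeholders chosen for illustration; they are not the model or the data estimated in the paper.

```python
from itertools import permutations

PLAYERS = ("algorithms", "compute", "data")

# Hypothetical input levels for an older and a newer model (illustrative only).
OLD = {"algorithms": 1.0, "compute": 1.0, "data": 1.0}
NEW = {"algorithms": 4.0, "compute": 4.0, "data": 2.0}


def toy_loss(algorithms, compute, data):
    # Assumed functional form: algorithms scale effective compute.
    return (algorithms * compute) ** -0.5 + data ** -0.5


def gain(upgraded):
    """Loss reduction when only the inputs in `upgraded` move to NEW levels."""
    levels = {p: (NEW[p] if p in upgraded else OLD[p]) for p in PLAYERS}
    return toy_loss(**OLD) - toy_loss(**levels)


def shapley_shares(value, players):
    """Average each player's marginal contribution over all orderings."""
    totals = {p: 0.0 for p in players}
    orderings = list(permutations(players))
    for order in orderings:
        included = frozenset()
        for p in order:
            with_p = included | {p}
            totals[p] += value(with_p) - value(included)
            included = with_p
    return {p: t / len(orderings) for p, t in totals.items()}


shares = shapley_shares(gain, PLAYERS)
total = gain(frozenset(PLAYERS))
for name, share in shares.items():
    print(f"{name}: {100 * share / total:.0f}% of the total gain")
```

Averaging over all orderings is what makes the split independent of which input is "upgraded first", and the shares always sum to the total gain between the two models.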

In our work, we revisit a question previously investigated by Hernandez and Brown (2020), which had been discussed on LessWrong by Gwern and Rohin Shah. Hernandez and Brown (2020) re-implement 15 popular open-source models and find a 44-fold reduction in the compute required to reach the same level of performance as AlexNet. This indicates that algorithmic progress outpaces the original Moore’s law rate of improvement in hardware efficiency, doubling effective compute every 16 months.
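The link between a fold reduction in compute and a doubling time is simple arithmetic, sketched below. The elapsed time of seven years (taken here as 2012 to 2019) is an assumption made for this illustration, and the helper names are hypothetical.

```python
import math


def doubling_time_months(fold_gain, elapsed_months):
    """Months per doubling implied by a total fold gain over a period."""
    return elapsed_months / math.log2(fold_gain)


def annual_multiplier(doubling_months):
    """Yearly growth factor implied by a given doubling time."""
    return 2 ** (12 / doubling_months)


ELAPSED_MONTHS = 7 * 12  # assumption: roughly 2012 to 2019

hb_doubling = doubling_time_months(44, ELAPSED_MONTHS)
print(f"44-fold over {ELAPSED_MONTHS} months: doubling every {hb_doubling:.1f} months")

# Compare the 16-month figure with the 9-month estimate and its 95% CI bounds.
for months in (16, 9, 4, 25):
    print(f"doubling every {months} months = {annual_multiplier(months):.2f}x per year")
```

Under this assumed time span, the 44-fold reduction lands close to the reported 16-month doubling time. The same conversion shows how wide the 4-to-25-month interval is when expressed as a yearly multiplier.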

A problem with their approach is that it is sensitive to the exact benchmark and threshold pair that one chooses. Choosing easier-to-achieve thresholds makes algorithmic improvements look less significant, as the scaling of compute easily brings early models within reach of such a threshold. By contrast, selecting harder-to-achieve thresholds makes it so that algorithmic improvements explain almost all of the performance gain. This is because early models might need arbitrary amounts of compute to achieve the performance of today’s state-of-the-art models. We show that the estimates of the pace of algorithmic progress with this approach might vary by around a factor of ten, depending on whether an easy or difficult threshold is chosen.[1]
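A toy example can illustrate this threshold sensitivity. The sketch below assumes a hypothetical error curve of the form `floor + (efficiency * compute) ** -alpha`, where an older architecture has a higher error floor; neither the functional form nor the parameter values come from the paper or from Hernandez and Brown (2020).

```python
def compute_to_reach(target_error, floor, efficiency, alpha=0.5):
    """Compute C solving floor + (efficiency * C) ** -alpha == target_error."""
    gap = target_error - floor
    if gap <= 0:
        # The target lies at or below this model's error floor: unreachable.
        return float("inf")
    return gap ** (-1 / alpha) / efficiency


# Hypothetical old and new architectures (illustrative values only).
OLD = {"floor": 0.20, "efficiency": 1.0}
NEW = {"floor": 0.05, "efficiency": 4.0}


def measured_efficiency_gain(target_error):
    """Compute reduction at a fixed error threshold, old relative to new."""
    return compute_to_reach(target_error, **OLD) / compute_to_reach(target_error, **NEW)


for threshold in (0.60, 0.25, 0.20):
    gain = measured_efficiency_gain(threshold)
    print(f"error threshold {threshold:.2f}: measured compute reduction {gain:.1f}x")
```

In this toy setting the measured reduction grows as the threshold approaches the old model's error floor, and becomes unbounded once the threshold reaches it, which mirrors the concern about picking a single benchmark and threshold pair.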

Our work sheds new light on how algorithmic efficiency gains occur, namely that they primarily operate through relaxing compute bottlenecks rather than data bottlenecks. It further offers insight into how to use observational (rather than experimental) data to advance our understanding of algorithmic progress in ML.

[1] That said, our estimates are consistent with Hernandez and Brown (2020)’s estimate that algorithmic progress doubles the amount of effective compute every 16 months, as our 95% confidence interval ranges from 4 to 25 months.